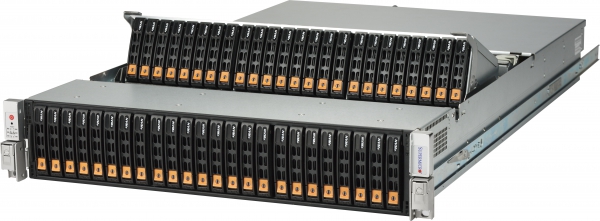

Big Data Server & Storage Solutions

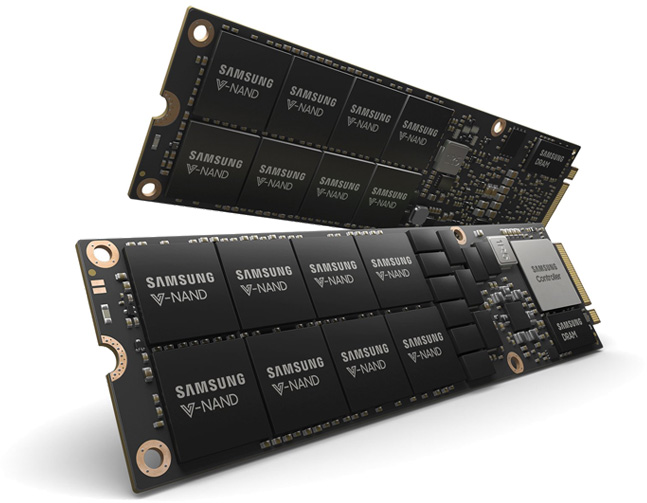

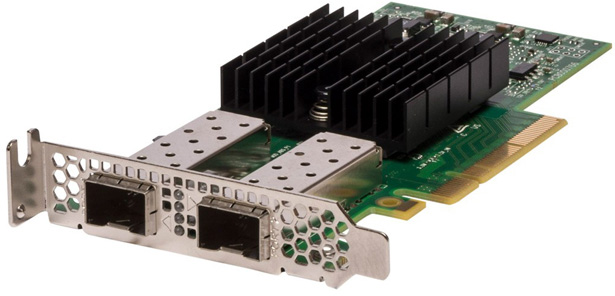

Broadberry Big Data server and storage solutions supercharge your Big Data applications through the latest hardware and software advances, from PCI-E NF1 drives to Tesla GPU processing.

Greater efficiency, better-informed decision-making and happier customers can all result from harnessing the power of Big Data.

Our Rigorous Testing

Unequalled Flexibility

Call Our UK Sales Team Now